This is a retrospective covering some of the lessons learned from ‘Project Aurora’. This project involved the migration of 60 data analyst users’ objects from a legacy SQL Server database to a scalable, fit-for-purpose Aurora database.

As you will see in this post, getting started with AWS is very easy. However, migration requires a team effort. Data analyst users’ support and buy-in were crucial to the success of this project.

Growing pains

Community and communication

Design challenges

Testing and go live

The future

Growing pains

At Xero, analytics is growing at an incredibly fast rate. However, it hasn’t always been this way. As of 2015, there were four marketing analysts in Wellington and another four in London, San Francisco, and Melbourne. Data science wasn’t a hot job yet, and the data analyst role was primarily to support marketing automation by pulling lists for email campaigns.

Toward the end of 2017, the community had grown to around 20 analysts and cracks had begun to appear in the SQL Server environment. Every few months, the amount of data stored increased, and there was a need for faster processing.

At the time of migration, there were over 60 data analysts and scientists using the platform. They used it to do everything from sales reporting to analysing sentiment in customer tickets to tracking customer journeys.

Life with SQL Server

The SQL Server sandpit environment was designed for a much smaller workload, wasn’t intended to be a production environment, and became difficult to monitor, debug, and deliver a quality service.

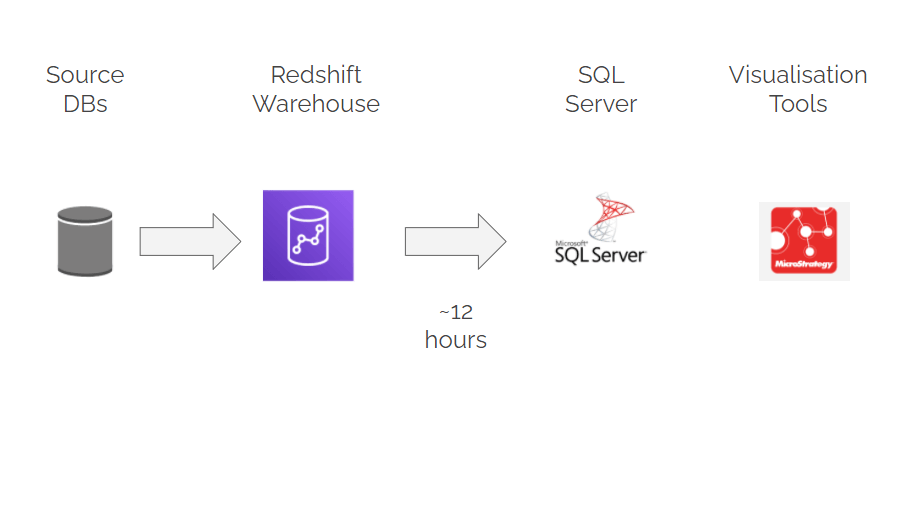

As data warehousing had been migrated to Amazon Redshift, we decided to use another AWS option to transform the analyst database from ‘state of emergency’ to ‘state of the art’.

We had reports of:

- Lack of visibility of when the SQL Server environment update would be completed and empty tables

- As a result of the update window, regional analysts lost valuable work time

- During peak times, multiple queries hit the same tables at the same time, resulting in database locking

Deciding on Aurora

Aurora provided the efficiencies we were looking for:

- It’s an entirely managed service that costs only 25% the cost of SQL Server licensing and storage

- Since it uses the same syntax as Redshift, copy times are shortened since they speak the same language

- The platform can adapt to growth as well. When you use AWS, scaling is as simple as pressing a button

The Aurora platform is a better choice for analysts as it’s 5x faster than SQL Server, 3x faster than standard Postgres, and queries run in seconds, not minutes.

Community and communication

We had to make sure there was a shared understanding between the project team and the analyst community before we could start solutioning a new environment.

Even within our own team, migrations had been complex, but this one was even more complex. Users were spread across four timezones, making it difficult to schedule meetings and keep communication flowing.

As a result of Xero’s expansion, new analysts were hired every month. Some with only Excel experience, while others are used to crunching through big data sets.

Our monthly workshops, a Slack channel dedicated to key users, and our efforts to make the environment as accessible as possible were among the ways we managed to get everyone on board.

Design challenges

With community support, we launched a proof-of-concept Aurora database in mid-2018 and reviewed the SQL Server environment we were dealing with.

Rationalisation

The first step was to determine how many tables we would have to migrate to Aurora and hopefully reduce that to the bare minimum. The SQL Server environment was designed as a sandbox, so there were legacy tables, temp tables, and schemas of objects no one seemed to own. After a lot of effort and a bit of nagging from me, we were able to clear out 2500 tables and free up 2TB of space.

Moving data around

The second challenge was to replace the mechanism that moved data from the data warehouse to the analyst database. Some tables have billions of rows, while others have hundreds of columns, and the SSIS process was unable to handle them. Transforming all that data from a Postgres Datawarehouse to a SQL Server database reduced efficiencies, and updating the SSIS packages that moved the data around was tedious and fiddly. We streamlined the transfer of data between Aurora and the data warehouse using Apache Airflow as our scheduler. The copying time has been reduced from over 12 hours to just two.

Searching for a scheduler

The third challenge was to find a scheduling tool that analysts could use to schedule stored procedures. Aurora does not come with a built-in job agent, so we had to explore other options.

CRON jobs were considered, but not all the analysts knew how to use the command line. We then considered setting up an Airflow server for the analysts. But with each new job added the Airflow server would need to be restarted. If every analyst in four different timezones had scheduled their own jobs, this could have been a disaster.

Ultimately, we decided to rely on what already existed. The analysts preferred to use Windows task scheduler since it provided little disruption and was a familiar tool.

Separation and security

Our last major consideration was about security and separating functional groups. The SQL Server database allowed everyone to see everything, so anyone could potentially delete anything. Our decision to create a schema for each business function ensured that tables could be created in a place dedicated to each team. Analysts could then grant access to the tables they wanted to share – because everyone shouldn’t have access to everything.

Testing and go-live

“Great job migrating all the data. Not an easy task. Thank you for all the support!”

We went from gathering requirements to user testing in six months, despite some challenges in designing the environment and moving the data around. However, the challenges don’t stop here. The new syntax, less technical users in need of more help, and queries that hit the database at once are all issues. Even so, the initial feedback was excellent.

“Wooo hooooo! Aurora is excellent. Glad we are using Postgres now”

The copying time has been reduced from 12 tedious hours to just over two hours. Analyst queries are returned much more quickly and ownership is much clearer between analysts. Migrating to Aurora has proven to be a smooth process. Getting such positive feedback from users has also been a huge accomplishment.

“It feels so much faster!

I’m sure we will encounter a few challenges but that’s part of every new system”

The future

By listening to analysts and taking a slow and steady approach, the project was complete in just nine months. We received feedback on how smoothly everything went, despite a few bumps. Analysts adapted to the new system and quickly became familiar with its quirks.

To get started with AWS, the first step is to check out the free tier. AWS provides one year of free services to get a feel for their services. Aurora is also available as Postgres and MySQL options, as well as serverless options. Once that’s done, it’s just a matter of clicking a few buttons.

Photo by Nubia Navarro (nubikini) from Pexels